Author: Andrea Manero-Bastin

This article was written by Marek Galovič.

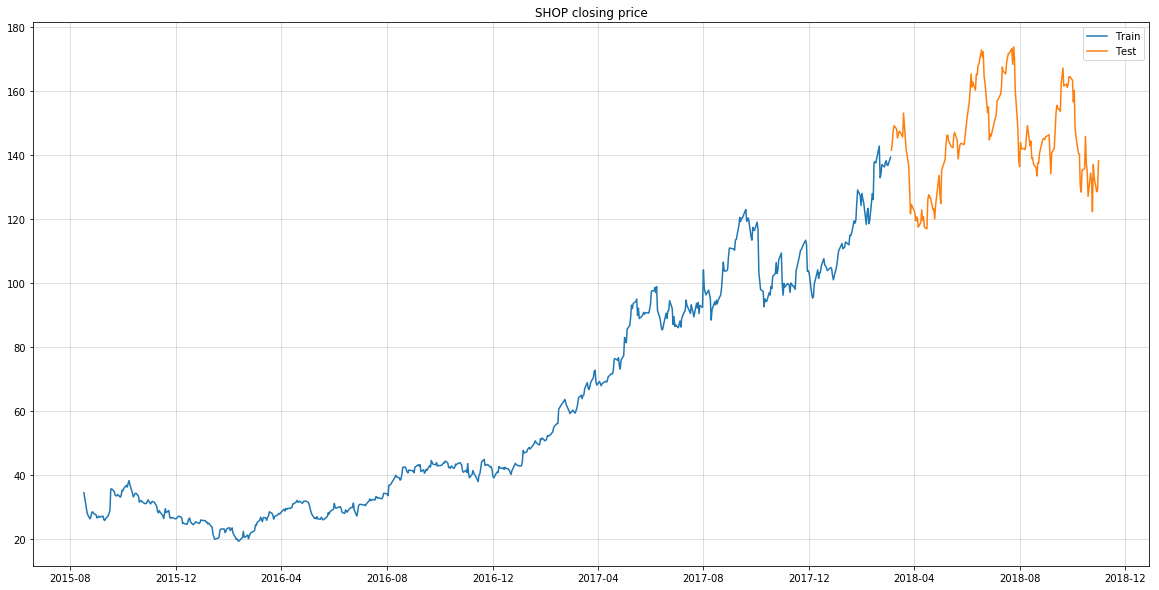

In this article I want to give you an overview of an RNN model I built to forecast time series data. The main objectives of this work were to design a model that generates a whole sequence of predictions rather than only the very next time step, and that takes multiple driving time series together with a set of static (scalar) features as its inputs.

Model architecture

At a high level, the model uses a fairly standard sequence-to-sequence recurrent neural network architecture. Its inputs are past values of the predicted time series, optionally concatenated with values of other driving time series and with timestamp embeddings. If static features are available, the model can also use them to condition the prediction.
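
As a rough illustration of how such inputs could be assembled, here is a minimal NumPy sketch. The sizes, the hour-of-day feature, and names such as `hour_table` are assumptions made for this example and are not taken from the original model.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
batch, steps = 4, 24
n_driving, emb_dim = 3, 8

target_past = rng.normal(size=(batch, steps, 1))        # past values of the predicted series
driving = rng.normal(size=(batch, steps, n_driving))    # optional driving series
hour_of_day = rng.integers(0, 24, size=(batch, steps))  # assumed timestamp feature

# Embedding table: one vector per hour (random here, learned in a real model).
hour_table = rng.normal(size=(24, emb_dim))
timestamp_emb = hour_table[hour_of_day]                 # (batch, steps, emb_dim)

# Concatenate everything along the feature axis.
encoder_inputs = np.concatenate([target_past, driving, timestamp_emb], axis=-1)
print(encoder_inputs.shape)  # (4, 24, 12)
```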

Encoder

The encoder encodes the time series inputs, together with their respective timestamp embeddings, into a fixed-size vector representation. It also produces latent vectors for the individual time steps, which are used later in decoder attention. For this purpose, I used a multi-layer unidirectional recurrent neural network in which all layers except the first one are residual.
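
The residual stacking can be sketched as follows. This is a simplified illustration that assumes a plain tanh RNN cell and random weights; the cell type used in the actual model is not stated here, and `rnn_layer` and `encode` are hypothetical helper names.

```python
import numpy as np

def rnn_layer(x, w_in, w_rec, b):
    """Run a simple tanh RNN over x of shape (steps, in_dim); return all hidden states."""
    h = np.zeros(w_rec.shape[0])
    outputs = []
    for x_t in x:
        h = np.tanh(x_t @ w_in + h @ w_rec + b)
        outputs.append(h)
    return np.stack(outputs)

def encode(x, layers):
    """Stack RNN layers; every layer after the first adds its input to its output."""
    out = x
    for i, (w_in, w_rec, b) in enumerate(layers):
        layer_out = rnn_layer(out, w_in, w_rec, b)
        out = layer_out if i == 0 else out + layer_out  # residual connection
    # Per-step outputs (for attention) and the last-step output,
    # used here as a stand-in for the fixed-size summary.
    return out, out[-1]

rng = np.random.default_rng(0)
in_dim, hidden, steps = 12, 16, 24  # assumed sizes
layers = [
    (rng.normal(scale=0.1, size=(in_dim if i == 0 else hidden, hidden)),
     rng.normal(scale=0.1, size=(hidden, hidden)),
     np.zeros(hidden))
    for i in range(3)
]
x = rng.normal(size=(steps, in_dim))
outputs, final = encode(x, layers)
print(outputs.shape, final.shape)  # (24, 16) (16,)
```

The first layer cannot be residual in this sketch because its input width (12) differs from its output width (16), which is one plausible reason for excluding it.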

In some cases the input sequences may be so long that training fails because of GPU memory limits or slows down significantly. To deal with this, the model passes the input sequence through a 1D convolution whose stride equals its kernel size before feeding it to the RNN encoder. This shortens the RNN input by a factor of n, where n is the convolution kernel size.
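
To make the length reduction concrete, here is a minimal NumPy sketch of a 1D convolution whose stride equals its kernel size. The function name `strided_conv1d`, the shapes, and the choice to drop a trailing partial window are assumptions of this example.

```python
import numpy as np

def strided_conv1d(x, kernel, bias):
    """x: (steps, in_dim); kernel: (n, in_dim, out_dim); stride equals kernel size n."""
    n, in_dim, out_dim = kernel.shape
    usable = (x.shape[0] // n) * n               # drop a trailing partial window
    windows = x[:usable].reshape(-1, n, in_dim)  # non-overlapping windows: (steps // n, n, in_dim)
    # Each window is reduced to a single out_dim-sized vector.
    return np.einsum("wki,kio->wo", windows, kernel) + bias

rng = np.random.default_rng(0)
x = rng.normal(size=(96, 12))         # a long input sequence
kernel = rng.normal(size=(4, 12, 16)) # kernel size n = 4
bias = np.zeros(16)

reduced = strided_conv1d(x, kernel, bias)
print(x.shape[0], "->", reduced.shape[0])  # 96 -> 24
```

Because the windows do not overlap, each RNN step sees a summary of n consecutive raw time steps.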

Context

The context layer sits between the input encoder and the decoder. It concatenates the encoder's final state with the static features and static embeddings and produces a fixed-size vector, which is then used as the initial state for the decoder.
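
A context layer of this kind might look like the sketch below. The single dense layer with a tanh activation and all sizes are assumptions; the text only says that the concatenated vector is mapped to a fixed-size vector.

```python
import numpy as np

def context_layer(encoder_state, static_features, static_emb, w, b):
    """Concatenate the three inputs and project them to the decoder state size."""
    joined = np.concatenate([encoder_state, static_features, static_emb])
    return np.tanh(joined @ w + b)  # tanh is an assumed choice of nonlinearity

rng = np.random.default_rng(0)
encoder_state = rng.normal(size=16)   # final encoder state
static_features = rng.normal(size=5)  # scalar features
static_emb = rng.normal(size=4)       # embedding of a categorical static feature
decoder_dim = 16

w = rng.normal(scale=0.1, size=(16 + 5 + 4, decoder_dim))
b = np.zeros(decoder_dim)

context = context_layer(encoder_state, static_features, static_emb, w, b)
print(context.shape)  # (16,)
```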

Decoder

The decoder is implemented as an autoregressive recurrent neural network with attention. The input at each step is a concatenation of the previous sequence value and the timestamp embedding for that step. Feeding timestamp embeddings to the decoder helps the model learn patterns in seasonal data.

At the first step, the decoder takes the context as its initial cell state and a concatenation of the initial sequence value and the first timestamp embedding as the cell input. The first layer then emits an attention query, which is fed to the attention module; the module outputs a state that is used as the cell state in the next step. Lower layers of the decoder don’t use attention. The outputs of the decoder are the raw predicted values, each of which is fed to the next step together with the timestamp embedding for that step.
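
The autoregressive loop can be sketched as follows. To keep the example short it uses a single tanh RNN layer and leaves attention out entirely, so it illustrates only how each prediction is fed back as the next input; the `decode` function, its parameters, and the sizes are hypothetical.

```python
import numpy as np

def decode(context, first_value, timestamp_embs, params):
    """Generate one prediction per timestamp embedding, feeding each back as input."""
    w_in, w_rec, b, w_out, b_out = params
    h = context          # the context vector initialises the cell state
    prev = first_value   # initial sequence value
    preds = []
    for emb_t in timestamp_embs:
        cell_in = np.concatenate([[prev], emb_t])  # previous value + timestamp embedding
        h = np.tanh(cell_in @ w_in + h @ w_rec + b)
        prev = float(h @ w_out + b_out)            # raw predicted value
        preds.append(prev)
    return np.array(preds)

rng = np.random.default_rng(0)
hidden, emb_dim, horizon = 16, 8, 12  # assumed sizes
params = (
    rng.normal(scale=0.1, size=(1 + emb_dim, hidden)),
    rng.normal(scale=0.1, size=(hidden, hidden)),
    np.zeros(hidden),
    rng.normal(scale=0.1, size=hidden),
    0.0,
)
context = rng.normal(size=hidden)
future_embs = rng.normal(size=(horizon, emb_dim))

preds = decode(context, 0.5, future_embs, params)
print(preds.shape)  # (12,)
```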

Attention

Attention allows the decoder to selectively access encoder information during decoding. It does so by learning a weighting function that takes the previous cell state and the list of encoder outputs as inputs and outputs a scalar weight for each encoder output. It then takes the weighted sum of the encoder outputs, concatenates it with the query, and applies a nonlinear projection to obtain the next cell state.
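
One way to realise this is sketched below. The additive (Bahdanau-style) scoring function, the softmax normalisation of the weights, and the tanh projection are assumptions of this example; the text above does not specify the exact form, and `attend` is a hypothetical name.

```python
import numpy as np

def softmax(z):
    e = np.exp(z - z.max())  # subtract the max for numerical stability
    return e / e.sum()

def attend(query, encoder_outputs, w_q, w_e, v, w_proj, b_proj):
    """Return the next cell state and the attention weights."""
    # One scalar score per encoder output (additive scoring, assumed).
    scores = np.tanh(encoder_outputs @ w_e + query @ w_q) @ v
    weights = softmax(scores)
    summary = weights @ encoder_outputs  # weighted sum of encoder outputs
    # Concatenate with the query and apply a nonlinear projection.
    next_state = np.tanh(np.concatenate([summary, query]) @ w_proj + b_proj)
    return next_state, weights

rng = np.random.default_rng(0)
steps, enc_dim, q_dim, att_dim, state_dim = 24, 16, 16, 10, 16  # assumed sizes
encoder_outputs = rng.normal(size=(steps, enc_dim))
query = rng.normal(size=q_dim)

next_state, weights = attend(
    query, encoder_outputs,
    rng.normal(scale=0.1, size=(q_dim, att_dim)),
    rng.normal(scale=0.1, size=(enc_dim, att_dim)),
    rng.normal(scale=0.1, size=att_dim),
    rng.normal(scale=0.1, size=(enc_dim + q_dim, state_dim)),
    np.zeros(state_dim),
)
print(weights.shape, next_state.shape)  # (24,) (16,)
```

With softmax normalisation the weights are positive and sum to one, so the summary is a convex combination of the encoder outputs.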
