News, Tutorials & Forums for AI and Data Science Professionals

Author: Jesus Rodriguez. Using continual learning might avoid the famous catastrophic forgetting problem in neural networks. Go to Source

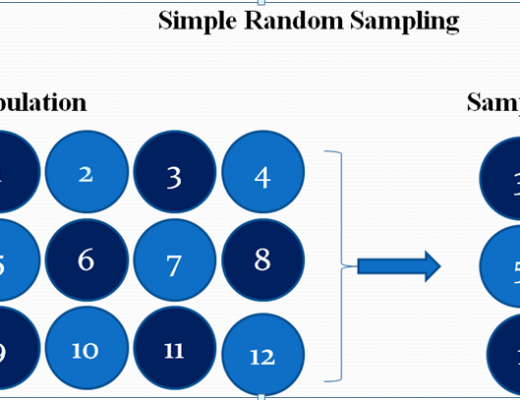

Author: Muhammad Touhidul Islam. Image source: Statistical Aid: A School of Statistics. Simple random sampling is considered the easiest and most popular method of probability […] Read More

Google AI launches Crowdsourcing Adverse Test Sets for Machine Learning (CATS4ML) Data Challenge. Source: MarkTechPost. Go to Source

Author: Jason Brownlee. Stochastic optimization refers to the use of randomness in the objective function or in the optimization algorithm. Challenging optimization algorithms, such as […] Read More

Author: Matt Mayo, Editor. Linear algebra is the branch of mathematics that studies vector spaces. You’ll see how vectors constitute vector spaces and how linear […] Read More

New Machine Learning Theory Raises Questions About the Very Nature of Science. Source: SciTechDaily. Go to Source

Author: Matt Mayo, Editor. Advance your data science career with Northwestern. Build the essential technical, analytical, and leadership skills needed for careers in today’s data-driven […] Read More

Author: Varun Bhagat. We live in an information age where the world of almost every organization revolves around data. Big Data is a field that […] Read More

Author: Jason Brownlee. It can be challenging to develop a neural network predictive model for a new dataset. One approach is to first inspect the […] Read More